Hidden Biases in Curriculum Selection and Their Impact

How hidden biases shape curriculum selection and skew student outcomes, and practical ways to audit, diversify, and monitor materials for equitable learning across K–12 and higher education.

The quietest decisions in schools are often the most consequential. When a district adopts a new science series or a department chooses which novels anchor ninth-grade English, it is selecting not just content but worldviews, language registers, and implied audiences. Most of the time, these choices are made with good intentions and under intense constraints—budgets, timelines, and test alignment. Yet even well-meaning selections can carry hidden biases that subtly skew whose knowledge is centered, which skills are privileged, and who recognizes themselves as a full participant in learning. Seeing those biases—and designing against them—is now a core professional responsibility.

What “hidden bias” in curriculum really looks like

Hidden bias is rarely one overtly exclusionary passage; it’s an accumulation of small choices that add up to unequal access and belonging. Common forms include:

- Representation bias. Who appears as authors, scientists, protagonists, and historical actors? In many textbook audits, men outnumber women in named examples by wide margins, sometimes three to one or more, and racial/ethnic minorities are underrepresented or appear only in limited roles (e.g., athletes, activists) rather than across professions.

- Omission bias. Entire lenses are left out. A U.S. history course that gives one paragraph to Reconstruction or avoids redlining while spending weeks on presidential timelines omits structural forces shaping present-day life.

- Framing bias. The language used to describe the same event can shift blame or agency. For instance, describing “settlers moving into open land” versus “settlers displacing Indigenous nations” changes both the moral framing and the historical accuracy of the account.

- Linguistic load bias. Materials assume advanced background vocabulary or dense syntax that exceeds students’ current proficiency, particularly for multilingual learners, without supports—creating a comprehension tax that is not about ability but access.

- Cultural relevance bias. Word problems about ski lodges and sailboats or essays about experiences far outside students’ daily lives can disadvantage those with different funds of knowledge; decoding context becomes a prerequisite to doing the math or analysis.

- Sequencing bias. Courses that assume prior exposure to concepts (e.g., algebraic thinking before algebra) can quietly penalize students who did not get that exposure—often those in historically under-resourced schools.

- Assessment alignment bias. When adopted materials prepare students for certain item types (say, multiple-choice recall) while high-stakes exams demand text-based analysis or multi-step problem solving, the misalignment can depress scores for students who rely on the official curriculum the most.

Consider a concrete example: An 8th-grade science unit features inventors of electrical technology. If the unit showcases only Edison and Tesla, it implicitly suggests innovation is Western and male. Adding Granville T. Woods (an African American inventor) or Hertha Ayrton (a pioneering British engineer) is not tokenism; it’s accuracy—and it quietly expands who can see themselves as scientists.

How bias sneaks into adoption decisions

Bias rarely enters with a stamp; it arrives by habit.

- Familiarity bias. Reviewers gravitate to what they’ve taught before, even if new evidence suggests better-aligned materials exist. “I know how to teach this one” becomes the trump card.

- Authority bias. A charismatic vendor or a neighboring district’s endorsement can overshadow local needs analysis.

- Risk aversion. Committees anticipate controversy and “play it safe” by selecting bland, decontextualized materials—ironically entrenching omission bias and depriving students of rigorous, relevant content.

- Test anchoring. In accountability-heavy environments, committees over-index on item-format alignment rather than on the quality of disciplinary thinking, literacy supports, or cultural responsiveness.

- Procurement constraints. Some districts require multi-year, single-vendor adoptions, shrinking options and making it harder to supplement where gaps emerge.

- Time pressure. Pilot windows collapse; teachers preview units over a weekend; administrators rely on glossy overviews instead of lived trials in varied classrooms.

Imagine a mid-size district choosing a grade 6–8 ELA program. Two vendors present. Vendor A offers strong text complexity progression and a test-aligned practice bank but thin representation and limited teacher guidance for multilingual learners. Vendor B offers robust supports for academic language and diverse anchor texts but fewer online test-prep items. Under a tight deadline and state pressure to raise scores, the committee chooses A. In the short term, practice scores tick up; in the long term, reading growth plateaus among multilingual learners, and reading stamina lags because students rarely see texts that resonate. The decision “worked,” at least until you widen the lens to ask who it worked for and for how long.

Why it matters: impacts on learning, identity, and opportunity

Hidden curricular biases are not abstract moral concerns; they translate into measurable differences.

- Motivation and persistence. Students are more likely to persist with challenging tasks when they find personal relevance or see themselves in the material. That is not about pandering; it’s about tapping prior knowledge to enable transfer.

- The “Matthew effect” in reading. Early accessibility matters. Materials that are consistently too hard in language or too thin in background knowledge can widen gaps: strong readers read more and grow faster, while others disengage and fall further behind.

- Identity safety and stereotype threat. When examples repeatedly position certain groups as central contributors and others as peripheral, students may experience subtle identity threats that sap working memory and performance under pressure.

- Skill pathways and course-taking. Biased sequences channel students. For example, tracking practices combined with curricula that assume out-of-school enrichment can suppress entry into Algebra I by 8th grade for students from under-resourced schools—cascading into fewer AP STEM opportunities later.

- Civic literacy. Omission and framing biases in social studies narrow students’ capacity to analyze contemporary policy debates with historical nuance, weakening civic participation.

A tangible illustration: A high school “World Literature” course includes Homer, Shakespeare, Dostoevsky, and Kafka. All are worthy. But if this is the main arc, students may leave with a view that world literature is European. When Chinua Achebe, Rabindranath Tagore, Sor Juana Inés de la Cruz, Naguib Mahfouz, or contemporary voices like Chimamanda Ngozi Adichie or Haruki Murakami enter the arc, students encounter a more accurate literary landscape—and more ways into complex themes.

A step-by-step audit for curriculum bias

You don’t need a PhD or a year-long study to surface hidden bias. Build an audit that’s rigorous enough to matter and light enough to execute.

- Clarify goals in writing.
  - Define your equity aims: representation, linguistic access, disciplinary rigor, local relevance, and assessment alignment.
  - Weight each aim (e.g., 25% representation, 25% rigor, 20% language supports, 15% relevance, 15% alignment).

- Run a representation inventory.
  - Count named individuals in anchor texts, images, and extended examples. Disaggregate by gender, race/ethnicity where discernible, geography, and profession.
  - Compare ratios to local demographics and to subject-accurate distributions (e.g., women’s representation in contemporary biology).
  - Note roles: Are underrepresented groups shown primarily as victims or as agents and creators?

- Analyze framing and perspective.
  - For history and literature, identify whose viewpoint is centered. Is there corroboration from primary sources representing multiple sides?
  - Flag euphemistic language (e.g., “laborers were brought” vs. “people were trafficked and enslaved”).
  - Check the presence of counter-narratives or historiographical debates at developmentally appropriate levels.

- Evaluate linguistic load and supports.
  - Use readability measures (e.g., sentence length, academic vocabulary density) as a starting point, not an endpoint.
  - Audit scaffolds: glossaries, sentence frames, visuals, multilingual adaptations, and opportunities for oracy.
  - Look for strategic translation or bilingual supports where local policy permits.

- Map conceptual sequencing.
  - Chart prerequisite knowledge assumed by each unit. If a 7th-grade math unit requires fluent manipulation of ratios introduced inconsistently in grade 6, flag it.
  - Check spiral review: Is important content revisited with increasing sophistication, or is it “one and done”?

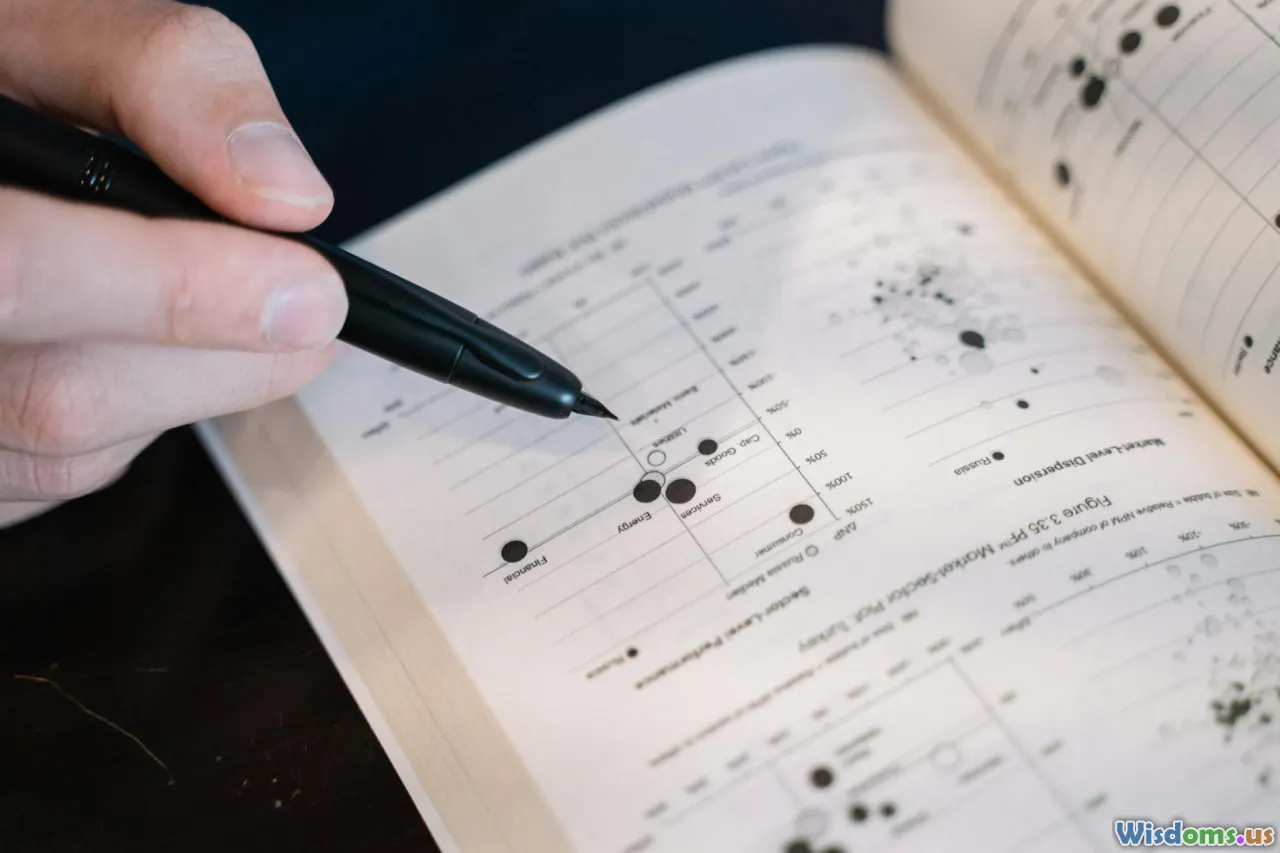

- Align with assessments without narrowing.
  - Ensure key standards and item types are covered, but also analyze whether tasks demand disciplinary thinking (argument from evidence, modeling, sourcing).
  - Pilot sample assessments with diverse student groups and examine differential item functioning: do certain groups consistently underperform on particular item types?

- Score and synthesize.
  - Use a rubric (e.g., 1–4 scale per domain). Require evidence notes, not just numbers.
  - Generate a one-page executive summary with strengths, risks, and recommended mitigations.
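
The example weights and the 1–4 scale can be combined into a single composite for comparing materials. Below is a minimal Python sketch, assuming each domain receives one rating plus an evidence note; the domain keys, the `composite_score` helper, and the sample ratings are hypothetical, and the weights simply repeat the illustrative percentages from the first step.

```python
import math

# Illustrative weights copied from the audit's example; each district sets its own.
WEIGHTS = {
    "representation": 0.25,
    "rigor": 0.25,
    "language_supports": 0.20,
    "relevance": 0.15,
    "alignment": 0.15,
}

def composite_score(ratings, weights=WEIGHTS):
    """Weighted average of 1-4 domain scores.

    ratings maps each domain name to a (score, evidence_note) pair; a score
    without an evidence note is rejected so the number never travels alone.
    """
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("weights must sum to 1")
    missing = sorted(set(weights) - set(ratings))
    if missing:
        raise ValueError(f"no rating for: {', '.join(missing)}")

    total = 0.0
    for domain, weight in weights.items():
        score, note = ratings[domain]
        if not 1 <= score <= 4:
            raise ValueError(f"{domain}: score {score} is outside the 1-4 scale")
        if not note.strip():
            raise ValueError(f"{domain}: an evidence note is required")
        total += weight * score
    return round(total, 2)

# Hypothetical ratings for one program under review.
ratings = {
    "representation": (2, "few women or scientists of color in anchor texts"),
    "rigor": (4, "tasks require modeling and argument from evidence"),
    "language_supports": (3, "glossaries present; no sentence frames"),
    "relevance": (2, "contexts rarely connect to local industries"),
    "alignment": (4, "covers tested standards and item types"),
}
print(composite_score(ratings))  # 3.0
```

A weighted average can hide a single weak domain (here, representation scores 2 despite a composite of 3.0), so the per-domain scores and evidence notes should travel with the composite into the executive summary.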

A quick example: In an elementary social studies unit, you count 42 named figures; 36 are male, 6 female; 40 are White, 2 people of color; 0 Indigenous leaders. The materials include two paragraphs on Japanese American incarceration during WWII but frame it as “wartime relocation” with no primary-source voices. Language supports are thin. Even if the pacing and activities are engaging, the audit reveals deep revision or supplementation is necessary.
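
The counts in this example translate directly into the ratios a representation inventory calls for. Here is a minimal Python sketch, assuming the tallies have already been made by hand; the function names are hypothetical, and the 50% benchmark passed to `gap_vs_benchmark` is a placeholder for local demographics or a subject-accurate distribution.

```python
def shares(counts):
    """Percentage share of each category, rounded to one decimal place."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no named figures counted")
    return {label: round(100 * n / total, 1) for label, n in counts.items()}

def gap_vs_benchmark(counts, benchmark_pct):
    """Percentage-point gap between each observed share and a benchmark share."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no named figures counted")
    return {
        label: round(100 * counts.get(label, 0) / total - pct, 1)
        for label, pct in benchmark_pct.items()
    }

# Counts from the social studies example above.
gender = {"male": 36, "female": 6}
race = {"White": 40, "people of color": 2}

print(shares(gender))                     # {'male': 85.7, 'female': 14.3}
print(shares(race))                       # {'White': 95.2, 'people of color': 4.8}
print(gender["male"] / gender["female"])  # 6.0
# Hypothetical benchmark of 50% women; replace with a locally justified figure.
print(gap_vs_benchmark(gender, {"female": 50.0}))  # {'female': -35.7}
```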

Short case studies: where selection helped—or hurt

- Case 1: Algebra with sports-only contexts. A district adopted a math series with engaging but narrow contexts (baseball averages, football yardage). In schools where many students had little interest in or exposure to these sports, engagement slumped. Teachers introduced alternative contexts (music production decibel levels, cooking ratios, public transit scheduling) and saw problem completion and classroom discussion increase, particularly among girls and multilingual learners.

- Case 2: The “classic novels only” policy. A high school required a fixed list of mid-century American and British novels. AP pass rates were solid, but 9th- and 10th-grade failure rates were high, especially among boys of color. After a revision allowing contemporary, thematically rigorous pairings (e.g., Gatsby with Between the World and Me; Macbeth with Fences), overall completion of long-form reading rose, and discipline referrals during ELA decreased, suggesting that engagement affects classroom climate.

- Case 3: Science texts with passive voice and no visuals. A middle school science program leaned heavily on dense expository text. Teachers documented that English learners scored 25–30% lower on end-of-unit quizzes than peers with similar math scores. After supplementing with visual models, graphic organizers, and structured academic talk, the gap narrowed substantially within a semester, evidence that the issue was access, not aptitude.

- Case 4: Local history erased. An urban district’s elementary program celebrated pioneer narratives but omitted the city’s own civil rights movements and labor history. Teachers collaborated with a community museum to co-create a mini-unit with primary sources and neighborhood walking tours. Parent participation in culminating exhibitions doubled compared to previous years, indicating stronger home-school connections.

Centralized adoptions vs. teacher curation vs. OER: bias trade-offs

Different selection models present distinct bias profiles.

- Centralized adoption (single program, districtwide)
  - Pros: Coherence across schools; economies of scale; easier professional development; clearer assessment alignment.
  - Bias risks: Monoculture. If the chosen program has gaps, everyone inherits them. Supplements require system-level coordination.

- Teacher-led curation (site or department autonomy)
  - Pros: Responsiveness to local context; teacher ownership; rapid iteration.
  - Bias risks: Inequity across classrooms; reliance on personal networks; risk of unvetted materials; implicit bias of individual curators may go unchecked.

- Open Educational Resources (OER) and remixing
  - Pros: Flexibility, cost savings, and the ability to localize content; public transparency enables community review; communities of practice can iterate quickly.
  - Bias risks: Variable quality; version sprawl; the loudest contributors shape direction; uncertain sustainability without dedicated funding.

A pragmatic hybrid is common: adopt a high-quality core, then codify a vetted bank of local supplements and OER modules to address representation, relevance, and language support gaps—so teachers don’t have to reinvent the wheel, but the wheel still fits your road.

Practical selection protocol for committees

A sound process reduces the chance that bias hides in the margins.

- Establish a north star. Publish the selection values: rigorous learning for all, cultural and historical accuracy, language access, and local relevance. Put them on every agenda.

- Diversify the decision-makers. Include teachers from different grade bands, multilingual and special education specialists, students, families, and community voices. If your student body is 60% multilingual learners but your committee has none, the process itself is biased.

- Define must-haves and red lines. Must-haves might include multilingual glossaries, authentic primary sources for history, explicit writing instruction, and disciplinary practices (argumentation, modeling, problem solving). Red lines might include erasure of local histories, stereotypes, unfounded claims, or lack of accessibility supports.

- Require vendor equity addenda. Ask publishers for representation data, readability rationales, scaffolding maps, and evidence of community field testing. If a vendor cannot provide this, score accordingly.

- Pilot with diverse classrooms. Run 6–9 week pilots across different school contexts. Collect student work, quick formative data, and perception surveys disaggregated by subgroup.

- Conduct the bias audit in parallel. Don’t assume a successful pilot means the materials are bias-free. Use the audit rubric described above; crosswalk findings with pilot data.

- Plan for supplementation. No core is perfect. Build a shared supplemental bank before final adoption and schedule PD on using it.

- Decide transparently. Publish a one-page rationale with evidence, including equity trade-offs and mitigation plans. Invite public comment before final board action.

- Monitor and iterate. Set 6-, 12-, and 24-month check-ins with data reviews and updated supplements.
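
Pilot assessment data is where the audit's differential item functioning check can be run. A common technique is the Mantel-Haenszel procedure: it compares the odds that reference-group and focal-group students answer an item correctly, among students with the same total score. Below is a minimal Python sketch, assuming each response is recorded as a `(group, total_score, correct)` tuple. The record format and the `mantel_haenszel_dif` name are hypothetical. Operational analyses usually rely on psychometric software that adds significance tests and minimum sample sizes.

```python
import math
from collections import defaultdict

def mantel_haenszel_dif(responses):
    """Mantel-Haenszel DIF statistics for a single test item.

    responses: iterable of (group, total_score, correct) tuples, where group is
    "reference" or "focal", total_score is the matching variable, and correct
    is True/False for this item. Returns (alpha_mh, mh_d_dif).
    """
    # One 2x2 table per score stratum: [ref right, ref wrong, focal right, focal wrong]
    strata = defaultdict(lambda: [0, 0, 0, 0])
    for group, total_score, correct in responses:
        cell = strata[total_score]
        if group == "reference":
            cell[0 if correct else 1] += 1
        elif group == "focal":
            cell[2 if correct else 3] += 1
        else:
            raise ValueError(f"unknown group: {group!r}")

    numerator = denominator = 0.0
    for a, b, c, d in strata.values():
        n = a + b + c + d
        numerator += a * d / n    # reference right, focal wrong
        denominator += b * c / n  # reference wrong, focal right
    if numerator == 0 or denominator == 0:
        raise ValueError("not enough mixed responses within strata to estimate DIF")

    alpha_mh = numerator / denominator     # common odds ratio, reference vs. focal
    mh_d_dif = -2.35 * math.log(alpha_mh)  # ETS delta scale; negative = harder for focal group
    return alpha_mh, mh_d_dif

# Hypothetical single-stratum data: matched students, but the focal group misses the item far more often.
responses = (
    [("reference", 5, True)] * 4 + [("reference", 5, False)]
    + [("focal", 5, True)] + [("focal", 5, False)] * 4
)
alpha, delta = mantel_haenszel_dif(responses)
print(round(alpha, 2), round(delta, 2))  # 16.0 -6.52
```

Under the usual ETS convention, absolute MH D-DIF values below 1 are treated as negligible. Items that are flagged deserve a close content review before they are dropped, because the pattern may point back to linguistic load or cultural context in the item itself.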

Tools, checklists, and data you can actually use

- Representation counter template. A simple spreadsheet with columns for name, role, identity markers (as discernible from text), profession, and context; auto-generates ratios.

- Language analysis. Use readability checkers and text analyzers to estimate sentence complexity and vocabulary frequency. Pair data with teacher judgment about conceptual load.

- Diversity style guides. Resources that flag biased terms or suggest more precise phrasing help weed out euphemisms and stereotypes.

- Rubrics for disciplinary practices. Check whether tasks demand sourcing in history, modeling in science, proof and reasoning in math, and rhetorical moves in ELA.

- Community asset map. Document local histories, industries, cultural institutions, and languages; use this to judge relevance and to source authentic local examples.

- Student perception surveys. Short, recurring items: “I see people like me represented in this course,” “I can use what I know from home/community to help me learn here,” and “The examples make sense to me.”

- Observation look-fors. Quick tools for coaches: evidence of multiple perspectives, language supports in use, equitable discussion structures (e.g., who speaks and how often).
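
A first pass at language analysis can be as simple as mean sentence length and the share of words drawn from an academic vocabulary list. The following is a minimal Python sketch under those assumptions. The `linguistic_load` function and the three-word list are hypothetical, and a district would substitute its own vocabulary list. Teacher judgment about conceptual load still has the final say.

```python
import re

def linguistic_load(text, academic_words):
    """Two rough indicators of linguistic load for a passage.

    Sentence splitting on . ! ? is naive (abbreviations such as "e.g." will
    over-split), so treat the output as a screening signal, not a verdict.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text.lower())
    if not sentences or not words:
        raise ValueError("text has no sentences or words to analyze")
    vocab = {w.lower() for w in academic_words}
    academic_count = sum(1 for w in words if w in vocab)
    return {
        "mean_sentence_length": round(len(words) / len(sentences), 2),
        "academic_density": round(academic_count / len(words), 2),
    }

# Hypothetical word list and passage.
ACADEMIC_WORDS = {"photosynthesis", "converts", "chemical"}
passage = "Photosynthesis converts light energy into chemical energy. Plants need light."
print(linguistic_load(passage, ACADEMIC_WORDS))
# {'mean_sentence_length': 5.0, 'academic_density': 0.3}
```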

Communicating choices to stakeholders without a culture war

In polarized times, curriculum changes can spark conflict. Proactive communication lowers the temperature.

- Start with purpose. Frame changes around learning goals: accuracy, rigor, and access for all students. Lead with student work and outcomes, not politics.

- Share the process. Publish timelines, committee composition, scoring rubrics, and pilot results.

- Provide side-by-side examples. Show a before-and-after lesson to illustrate gains in clarity or inclusion. People argue less with concrete artifacts.

- Offer opt-ins where appropriate. For sensitive texts, offer alternative selections that meet the same standards and skills without stigmatizing choices.

- Train messengers. Equip principals and teachers with talking points and FAQs grounded in the selection values and evidence.

- Keep channels open. Hold listening sessions and office hours; respond to concerns with references to the published rubric and data.

A sample impact brief might include a two-page executive summary, a one-page equity audit snapshot, three student work samples, and a Q&A addressing the community’s five most common questions.

Policy and compliance guardrails to respect

- State standards and district policy. Ensure selected materials cover required standards and align to board-approved instructional frameworks and timelines.

- Accessibility laws. Provide accommodations and accessible formats (e.g., screen-reader compatible PDFs, captions, alt text) to meet legal obligations and learning needs.

- Privacy and data. Vet digital platforms for student data privacy compliance before adoption.

- Intellectual freedom and parental rights. Establish transparent procedures for text challenges and alternative assignments consistent with policy, applied fairly and consistently.

- Procurement regulations. Follow competitive bidding and conflict-of-interest rules; document scoring and rationales to safeguard integrity and trust.

Compliance is not a ceiling; it is the floor. Equity-centered selection goes beyond minimal requirements to ensure students experience accuracy, dignity, and challenge.

Metrics that show impact—beyond test scores

Track early signals and long-run shifts.

- Access and completion
  - Reading volume: minutes/pages read per week by subgroup.
  - Assignment completion rates on core tasks in each unit.

- Engagement and climate
  - Student-reported relevance and belonging (survey scales).
  - Discussion equity: proportion of students speaking at least once per class.

- Learning growth
  - Interim assessment growth by standard cluster, disaggregated.
  - Writing rubric growth in argumentation and evidence use.
  - Science/Math task performance on modeling, reasoning, and problem solving.

- Opportunity pathways
  - Enrollment in advanced math/ELA tracks by subgroup.
  - AP/IB course-taking and exam participation rates.

- Discipline and attendance
  - Office referrals during core classes.
  - Course-specific attendance patterns, especially on text-heavy days.

Build a simple dashboard that updates quarterly. Pair numbers with qualitative artifacts: student reflections, annotated work samples, and teacher notes from classroom observations.
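
Most of these metrics reduce to disaggregated rates that a spreadsheet or short script can refresh each quarter. As one example, here is a minimal Python sketch of the discussion-equity metric, assuming a coach logs a student ID each time someone speaks. The roster format, the subgroup labels, and the `discussion_equity` function are hypothetical.

```python
from collections import Counter

def discussion_equity(roster, speaker_log):
    """Share of students in each subgroup who spoke at least once in a class.

    roster: dict mapping student ID -> subgroup label.
    speaker_log: iterable of IDs, one entry each time someone speaks;
    IDs not on the roster (visitors, staff) are ignored.
    """
    spoke = set(speaker_log)
    enrolled = Counter(roster.values())
    spoke_by_group = Counter(group for student, group in roster.items() if student in spoke)
    return {group: round(spoke_by_group[group] / n, 2) for group, n in enrolled.items()}

# Hypothetical roster and observation log for one class period.
roster = {"s1": "multilingual", "s2": "multilingual", "s3": "not multilingual", "s4": "not multilingual"}
log = ["s3", "s3", "s4", "s1", "teacher"]
print(discussion_equity(roster, log))
# {'multilingual': 0.5, 'not multilingual': 1.0}
```

With small subgroups, a single student swings the rate sharply. Averaging across several observed periods before charting quarterly trends gives a steadier signal.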

What teachers can do tomorrow if the adopted curriculum is biased

Even when the system hasn’t yet shifted, classroom-level moves matter.

- Add mirrors and windows. Introduce short supplemental texts, examples, and problems that reflect your students and open new vistas. A two-page profile of a local engineer can reframe a physics unit.

- Context-swap word problems. Keep the mathematics, change the scenario. Replace “yachts in a marina” with “buses on a route” or “battery charge cycles” to fit students’ experiences.

- Pre-teach and translate key terms. Identify Tier 2 and Tier 3 vocabulary; provide bilingual glossaries, visuals, and sentence frames. Use structured talk routines like “Say It Again Better” or “Think-Pair-Share” to build academic language.

- Source multiple perspectives. For a history topic, add one primary source from a marginalized perspective and require sourcing and corroboration. Ask: Who is speaking? How do we know?

- Invite expertise from the community. A parent working in logistics can illuminate systems thinking in math; a local elder can enrich an immigration story.

- Normalize critique. Tell students, “Curricula are made by people; we evaluate them like any text.” Have students annotate for bias, omissions, and framing. This builds critical literacy while sharing power.

- Document and share. Track what works. Submit concrete supplements to your department or district so effective practices scale.

Building a living, bias-aware curriculum culture

Curriculum does not end on adoption day. Treat it as a living system.

- Create an evolving supplemental library. Store vetted texts, tasks, and local case studies tagged by standard and subgroup access need. Rotate items quarterly to prevent stagnation.

- Schedule equity-focused PLC cycles. Each quarter, select one unit to examine for representation, language supports, and task demands. Bring student work; test a supplement; share results.

- Maintain student advisory panels. Twice a year, gather student feedback on relevance and challenge. Include students who typically dislike school—it will sharpen your insights.

- Build vendor partnerships for iteration. Share audit findings with publishers; request updates or co-develop local addenda. Some vendors will pilot revisions with your teachers.

- Celebrate exemplars. Highlight lessons where teachers integrated multiple perspectives, strong scaffolds, and disciplinary rigor. Make it normal to talk about bias mitigation as an instructional craft, not a moral failing.

Over time, the goal is cultural: bias-aware selection becomes standard operating procedure, not a special initiative. Each cohort of students moves through courses that are not just less biased but more intellectually honest, linguistically accessible, and powerfully engaging.

Curriculum choices are our quiet levers of justice and excellence. When we widen whose knowledge counts, calibrate language for access without lowering cognitive demand, and insist on accuracy over comfort, we do more than diversify examples—we unlock learning. The payoff shows up in stronger thinking, deeper reading, richer discourse, and, most importantly, in students who recognize school as a place built with them in mind.
